A machine learning approach to decoding ECoG signals for vowel classification

Author: tschnoor

| Code Repository URL | https://github.com/uazhlt-ms-program/ling-582-fall-2025-course-project-code-ecog-speech-decoding |
|---|---|
| Demo URL (optional) | |
| Team name | ECoG Speech Decoding |

Project description


Overview

This project aims to build a neural network that decodes formants from electrocorticography (ECoG) signals. ECoG signals are recorded by placing a grid of tens to hundreds of small electrodes directly on the surface of the brain to measure electrical activity. This methodology has the potential to be useful in a variety of practical applications, particularly as a brain-computer interface (BCI). One area of interest is restoring speech communication by decoding ECoG signals and using the decoded features to reconstruct an acoustic signal. Artificial neural networks are the current standard for ECoG decoding.

The specific aim of the project is to create a neural network ECoG decoder that decodes ECoG -> normalized formant frequencies. For example, the model might take a window of 32 samples of 15 x 15 electrode readings as input and its task would be to predict the frequencies of the first two formants at the end of the window. I aim to use a modified version of the ResNet architecture for the decoder, heavily inspired by the study described below. The intention behind this system is to use it in conjunction with an acoustic waveguide model of the vocal tract to simulate speech. The end result would be a more interpretable pipeline from cortical signals to speech that could be used to model the entire speech production process.
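The windowing described above can be sketched in a few lines of Julia. This is a minimal illustration, not the project's actual data loader: the function name `make_windows`, the array layout (height x width x time), and the assumption that the formant track has one value per ECoG sample are all hypothetical.

```julia
# Hypothetical helper: slice an ECoG recording into fixed-length windows.
# ecog:     H x W x T array (e.g. 15 x 15 electrodes by T time samples)
# formants: 2 x T array of normalized F1/F2 values, assumed aligned with ecog in time
function make_windows(ecog::AbstractArray{<:Real,3}, formants::AbstractMatrix{<:Real};
                      window::Int=32, step::Int=1)
    T = size(ecog, 3)
    size(formants, 2) == T || throw(ArgumentError("ecog and formants must share a time axis"))
    starts = 1:step:(T - window + 1)          # first sample of each window
    X = [ecog[:, :, s:(s + window - 1)] for s in starts]
    Y = [formants[:, s + window - 1] for s in starts]   # target: formants at the window's last sample
    return X, Y
end

# Random stand-in data with the shapes mentioned in the text
ecog = randn(Float32, 15, 15, 100)
formants = randn(Float32, 2, 100)
X, Y = make_windows(ecog, formants; window=32, step=16)
```

With 100 samples, a window of 32, and a step of 16, this yields five windows of size 15 x 15 x 32, each paired with a two-element formant target.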

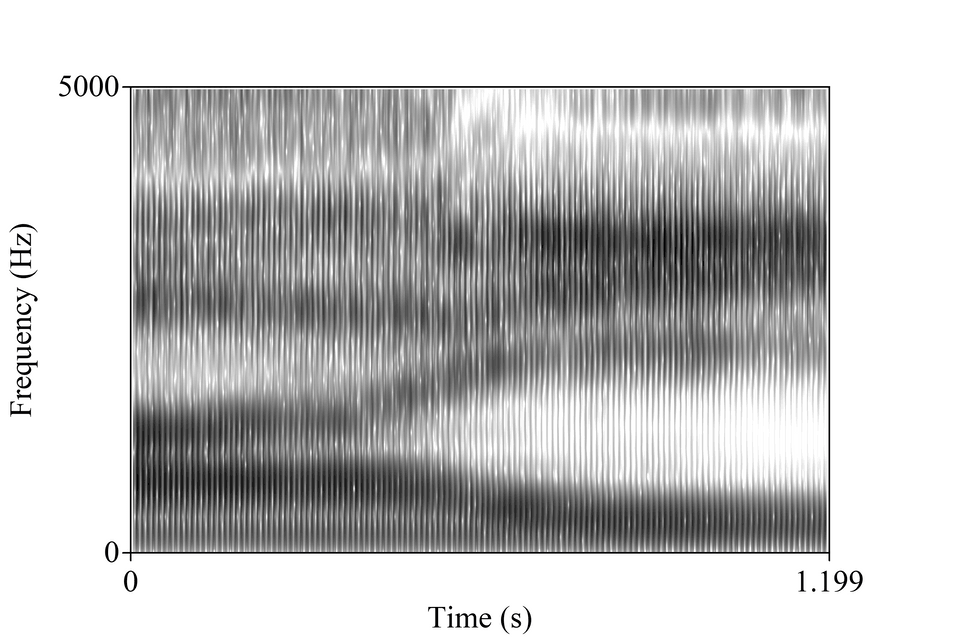

The formants are the dark bands in this image, called a spectrogram.

Related work and the state of the art

The gold standard of speech restoration is real-time decoding and reconstruction so that a speech neuroprosthesis can be used to communicate in a naturalistic way. The data used in this project come from a study by Chen et al. (2024) that represents one of the most recent innovations in the field. The data consist of ECoG signals, audio recordings, and some derived features of the audio (e.g., formant tracks), all collected while the participants spoke words aloud. The study aimed to create a real-time speech neuroprosthesis that could restore speech communication for people with severe motor impairments. Their methodology involved training two neural networks with different purposes. The first was an ECoG -> speech features decoder. The second was a speech autoencoder that reconstructed an acoustic signal from the decoded speech features. The present project focuses on the first of these two networks, though I certainly grew to appreciate the necessity of the second (see section "Challenges of the task").

An overview of the Chen et al. study.

Novelty and motivation

This project uses interpretable acoustic features as the speech representation. This is in contrast to most approaches, which use abstract features or features which are only loosely related to certain qualities of spoken language. An additional novelty associated with this project is the intention to use decoded features with an acoustic waveguide model. A full description of the acoustic waveguide algorithm is outside the scope of this blog post, but the model can be thought of as a physics-based model of speech. It can synthesize intelligible speech, much like other models can, but it is also a simulation of speech, as it calculates the propagation of pressure waves through a digital vocal tract. The model is therefore useful for doing science because its parameters and calculations are interpretable analogues to natural speech production.

Such a model also has its drawbacks. For one, it is only useful if it can be controlled. Choosing the right parameters to emulate a speech recording is a labor-intensive and finicky task. The vision with this project is to decode one of the parameters for the model automatically from cortical activity so that the speech chain (from brain to acoustics) can be studied.

There would also be significant practical applications for such a pipeline. As in the previously described paper, an ECoG decoder + speech synthesizer pairing could provide real-time speech restoration to those with a speech impairment. Using a waveguide model would also provide significant benefits here over other synthesis methods. For example, if the BCI user is young and still growing but has already lost the ability to speak naturally, the model parameters could be adjusted to scale the size of the vocal tract with age, producing a lower, more age-appropriate fundamental frequency. Achieving the same effect with a different system may require artificially lowering the pitch of training data and then retraining networks, which would require far more work and likely yield a less natural result.

Challenges of the task

This is a highly challenging task, and a project of this limited scope is unlikely to produce a model that performs well. The first challenge is that the task involves the extraction of interpretable but low-dimensional information from high-dimensional cortical signals. The fear is that the desired information could get "lost" in all of the input data.

Neurosignal decoding is inherently difficult because the signals are noisy. There is no guarantee that a particular electrode channel or region of electrode channels corresponds to any particular feature. Signals can be complicated by a distracted participant, a participant error, a faulty electrode, or interference from other equipment.

Perhaps the most significant challenge (and it is one that I didn't know about until I had started this project) is that the formant tracks provided by the authors are extremely inaccurate. At first I assumed that, because the authors experienced such success with their system, the formant tracks, which are used as input parameters to their own speech synthesizer, must be quite accurate. However, I failed to account for just how much the network could compensate for formant tracking errors. The "black box" nature of their system means that it is difficult to say how much information their synthesizer even gleans from the formant parameters. It may simply rely on the other, more accurate parameters to synthesize speech. The beauty of the two-network system is that the speech synthesis network can learn to compensate for inconsistencies in the provided parameters. It accomplishes this by comparing its output to the natural recording directly, through a Short-Time Objective Intelligibility (STOI) loss.

A note about why I didn't proceed with the plan to make a vowel classifier

In my original proposal, I proposed a project that centered around making a vowel classifier with ECoG inputs. However, I soon realized that I did not have enough examples of each vowel (<100 each) to train a good model. The project I went with also didn't really work, so I am sort of second-guessing changing course. Regardless, I felt like I should explain myself.

Procedure

Here is a list of things that I did as I tried to make this project work:

- I varied the window and step size
  - I tried variations with 64 samples per window down to 32 samples per window (they use 64 in the reference paper)
  - I tried different step sizes from 1 to window_size/2
- I varied the architecture
  - I tried with the architecture presented in the paper
  - I tried with a simplified architecture (~30% of layers removed)
  - I tried with different numbers of channels in the convolutional layers
  - Overall, simpler seemed to be better, which makes sense given the amount of overfitting you are about to see in the next section
- I tried modeling the formant values as time series rather than a stationary target
  - This involved swapping the dense layers at the end with more convolutions that reduced the size down to the desired 2 x 1
  - This didn't seem to help at the time, but I should try this again in combination with some of the other changes I made.
- I made sure to normalize and center the formant values around zero. This places most of the data between -1 and 1
  - I didn't try using the raw formant values, but I imagine that would have made things worse
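The normalization step in the list above could look something like the following. This is a sketch under assumptions: the post does not say which normalization was used, so per-formant z-scoring is assumed here, and the helper names `fit_zscore`, `normalize_formants`, and `denormalize_formants` are hypothetical.

```julia
using Statistics

# Hypothetical helpers; z-scoring is an assumption, not the post's stated method.
# Rows are formants (F1, F2), columns are time samples.
function fit_zscore(train::AbstractMatrix{<:Real})
    mu = mean(train; dims=2)      # per-formant mean, size 2 x 1
    sigma = std(train; dims=2)    # per-formant sample standard deviation, size 2 x 1
    return mu, sigma
end

# Map raw values to zero-centered units, and back again for interpretation
normalize_formants(y, mu, sigma) = (y .- mu) ./ sigma
denormalize_formants(z, mu, sigma) = z .* sigma .+ mu

# Toy formant values in Hz (made up for illustration)
train = [500.0 600.0 700.0; 1500.0 1700.0 1900.0]
mu, sigma = fit_zscore(train)
z = normalize_formants(train, mu, sigma)
```

Fitting `mu` and `sigma` on the training split only and reusing them for validation data keeps the two splits on the same scale, and `denormalize_formants` converts predictions back to Hz.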

_Note: There is no residual block layer built into Flux.jl, which is the main machine learning library in Julia. I wrote my own but based it heavily on this implementation by Anton Smirnov. The main difference is that his is meant for 2D image data, while mine is adjusted to work with 3D "video" data._

Summary of individual contributions

| Team member | Role/contributions |
|---|---|
| Tyler Schnoor | Everything |

Results


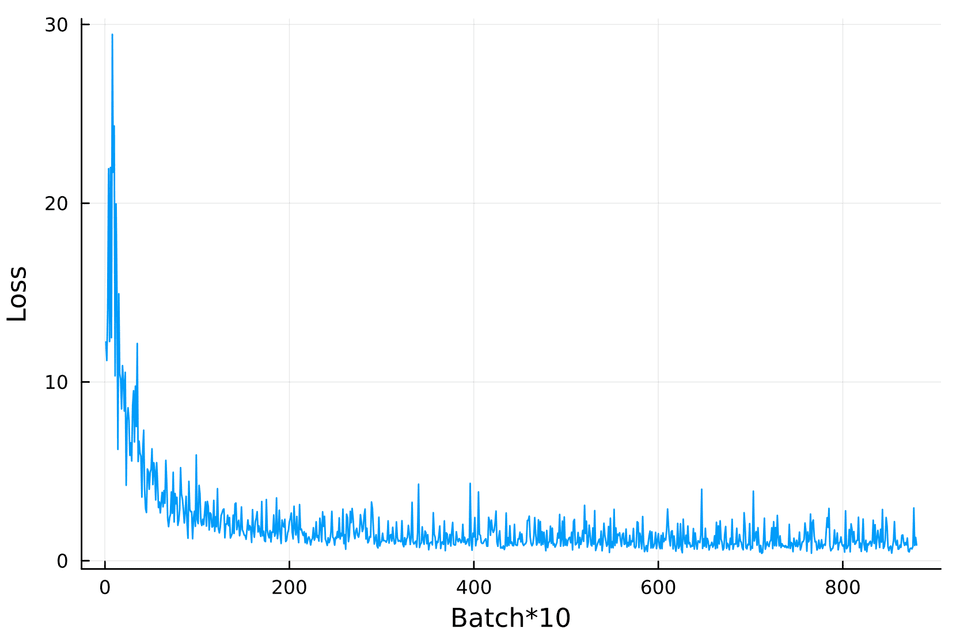

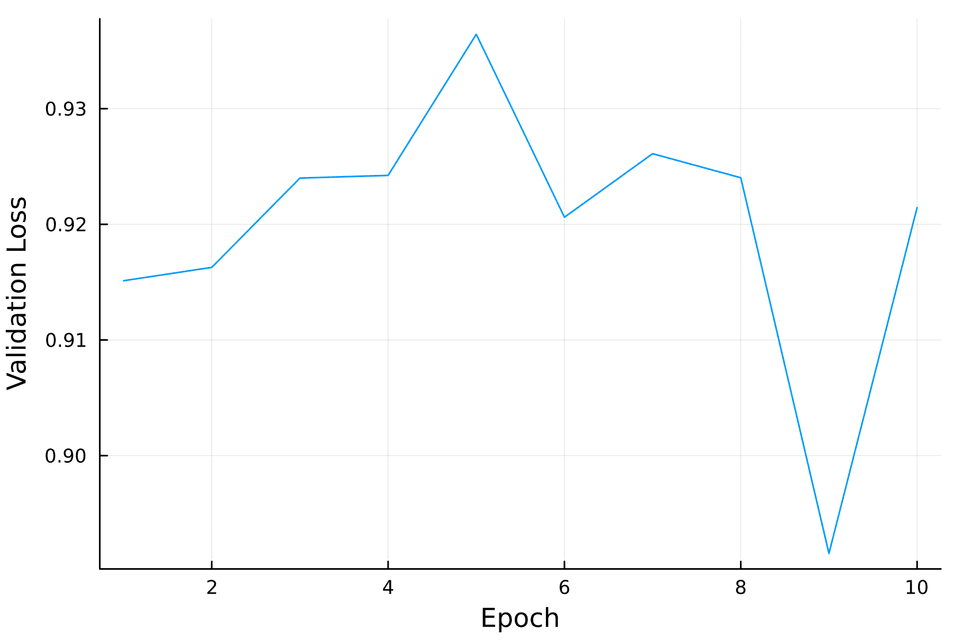

Below are the results of my best training run. The training loss, logged every 10 batches can be seen on the left, while the validation loss by epoch on a 20% holdout set is shown on the right. These show that the loss never drops below ~0.9. That is high considering that it is plots show RMSE.

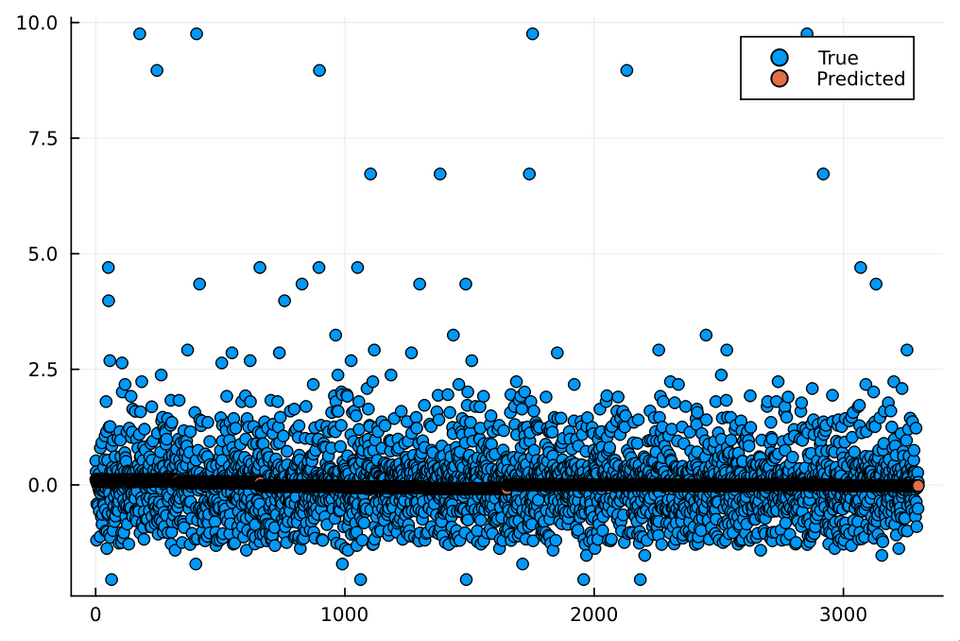

Unfortunately, upon closer evaluation, it is obvious that the model has simply learned to predict the mean:
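One way to make "predicting the mean" concrete is to compare the model against a constant baseline. The sketch below is illustrative only: `mean_baseline` and `skill` are hypothetical helper names, and the numbers are toy values rather than the project's data.

```julia
using Statistics

rmse(y_true, y_pred) = sqrt(mean((y_true .- y_pred) .^ 2))

# Constant predictor that always outputs the mean of the given targets
mean_baseline(y_train, n) = fill(mean(y_train), n)

# Fraction of the baseline error removed by the model:
# 0 means no better than the mean, 1 means perfect, negative means worse than the mean
skill(y_true, y_pred, y_base) = 1 - rmse(y_true, y_pred) / rmse(y_true, y_base)

# Toy zero-centered targets (made up for illustration)
y = [-1.5, -0.5, 0.0, 0.5, 1.5]
base = mean_baseline(y, length(y))
baseline_rmse = rmse(y, base)
```

The RMSE of the mean predictor equals the (population) standard deviation of the targets, which is close to 1 for standardized data. A loss that plateaus around 0.9 is therefore consistent with a model that has learned little beyond the mean.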

Error analysis


Error analysis gets a little awkward now that we know the model is just guessing ~0 every time. Even so, I'm going to go through the motions. Here are some error measurements on the first formant, for example.

1julia> mae = mean(abs.(y_true .- y_pred))20.58739268767508873

4julia> rmse = sqrt(mean((y_true .- y_pred).^2))50.94362361728351786

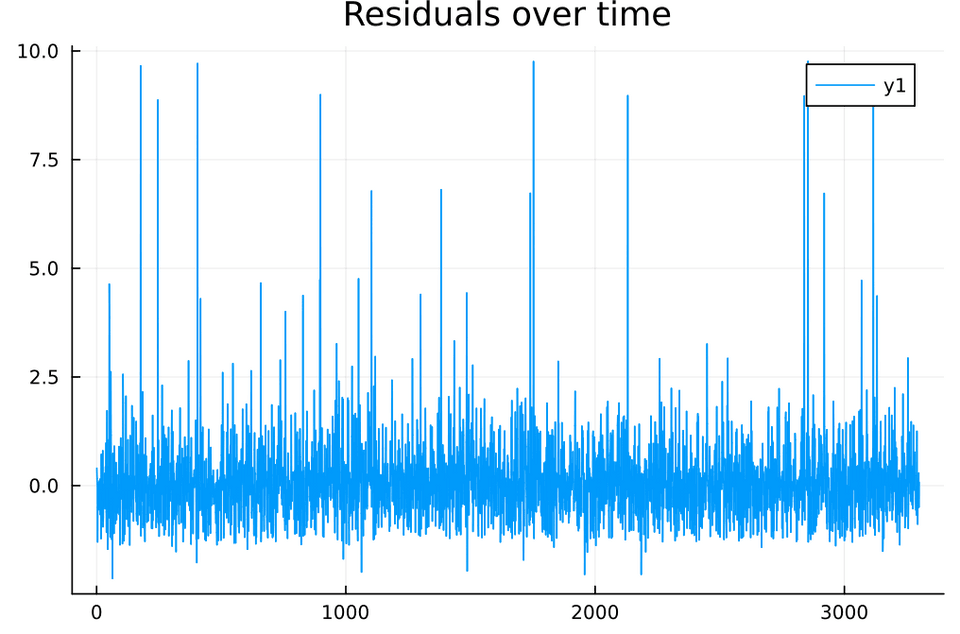

7julia> mape = mean(abs.((y_true .- y_pred) ./ y_true)) * 1008229.95062746555342Because we have two timeseries to compare, it makes sense to look at the residuals between them. This plot just shows that the residuals are largely unchanging. The spikes occur when we get outliers from bad formant tracks.
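The residual spikes could also be located programmatically rather than by eye. The following is a minimal sketch, assuming a simple robust threshold based on the median absolute deviation (MAD). The function `flag_spikes` and the cutoff `k` are hypothetical choices, not something used in the project.

```julia
using Statistics

# Hypothetical helper: return indices where the residual deviates from the median
# residual by more than k times the median absolute deviation (MAD).
function flag_spikes(y_true::AbstractVector{<:Real}, y_pred::AbstractVector{<:Real}; k::Real=3.0)
    r = y_true .- y_pred
    med = median(r)
    mad = median(abs.(r .- med))
    return findall(abs.(r .- med) .> k * mad)
end

# Toy data: a near-constant prediction and one badly tracked frame (made up for illustration)
y_true = [0.1, -0.2, 0.0, 5.0, 0.2, -0.1]
y_pred = zeros(6)
spikes = flag_spikes(y_true, y_pred)
```

On this toy input only the fourth frame is flagged. Frames flagged this way would be natural candidates for manual inspection of the underlying formant track.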

Reproducibility


I have containerized my code. To reproduce my results, follow the instructions in the README here.

Future improvements


Ultimately, this project did not work. The system is severely limited by the inaccuracy of the formant values on which it was trained. In addition, a 2 x 1 (two formant values per window) representation of the speech process may not be descriptive enough for this sort of task. As seems to so often be the case, I also think I was limited by the amount of data I had to work with. Even though I could extract thousands of windows to train the model, many of these windows were similar to one another (sliding windows with a step size of one, for example) and may have starved the system of real diversity. I can also now see that drastically reducing the dimensionality of the speech representation from many features to only two AND keeping the complexity of the model high was a bad call. I did reduce this complexity in later runs, but obviously there is more work to be done.
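The redundancy between sliding windows can be quantified with a little arithmetic. The helpers below are a hypothetical sketch, and the recording length of 1000 samples is an arbitrary example.

```julia
# Fraction of samples that two consecutive windows share
overlap_fraction(window::Int, step::Int) = max(window - step, 0) / window

# Number of full windows that fit into a recording of T samples
n_windows(T::Int, window::Int, step::Int) = T < window ? 0 : fld(T - window, step) + 1

shared = overlap_fraction(32, 1)        # step size of one
dense  = n_windows(1000, 32, 1)         # many windows, mostly redundant
sparse = n_windows(1000, 32, 32)        # non-overlapping windows
```

With a window of 32 and a step of one, consecutive windows share 31 of their 32 samples, so a 1000-sample recording produces 969 windows but only 31 non-overlapping ones.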

These limitations could be addressed. Formant tracks could be manually corrected or automatically extracted through more robust means. I also think that a richer representation of the speech signal would aid training (e.g., if the rest of the parameters for the waveguide model could be used as our "y"). Although I did test some of these parameter changes, it would also be interesting to do a more comprehensive evaluation of optimal window sizes, step sizes for sliding windows, feature channels in the convolutional layers, etc. More specifically, my best results came from simplifying the model, so I'd like to push this further and record the results. Unfortunately, unless the authors of the source paper begin to feel more generous, it is unlikely I will obtain more of this already rare data. However, there are many things I could do to refine the data that I do have access to. Alternatively, one next step could be devising my own speech autoencoder that uses acoustic waveguide parameters.